The moment I stopped chasing cheap

I needed to build a feature for one of my SaaS products. A compliance workflow that parses regulatory frameworks, maps requirements against a customer’s existing policies, and generates a gap analysis. Not trivial, but well-defined.

I started with a free model. It got the data structure wrong. I reprompted. It missed edge cases in the mapping logic. I reprompted again. Ten iterations later, the output was still breaking in ways that took longer to debug than to just write the code myself.

I switched to a model that costs roughly 50x more per token. It nailed the task in one shot. Correct structure, accurate mapping, clean output.

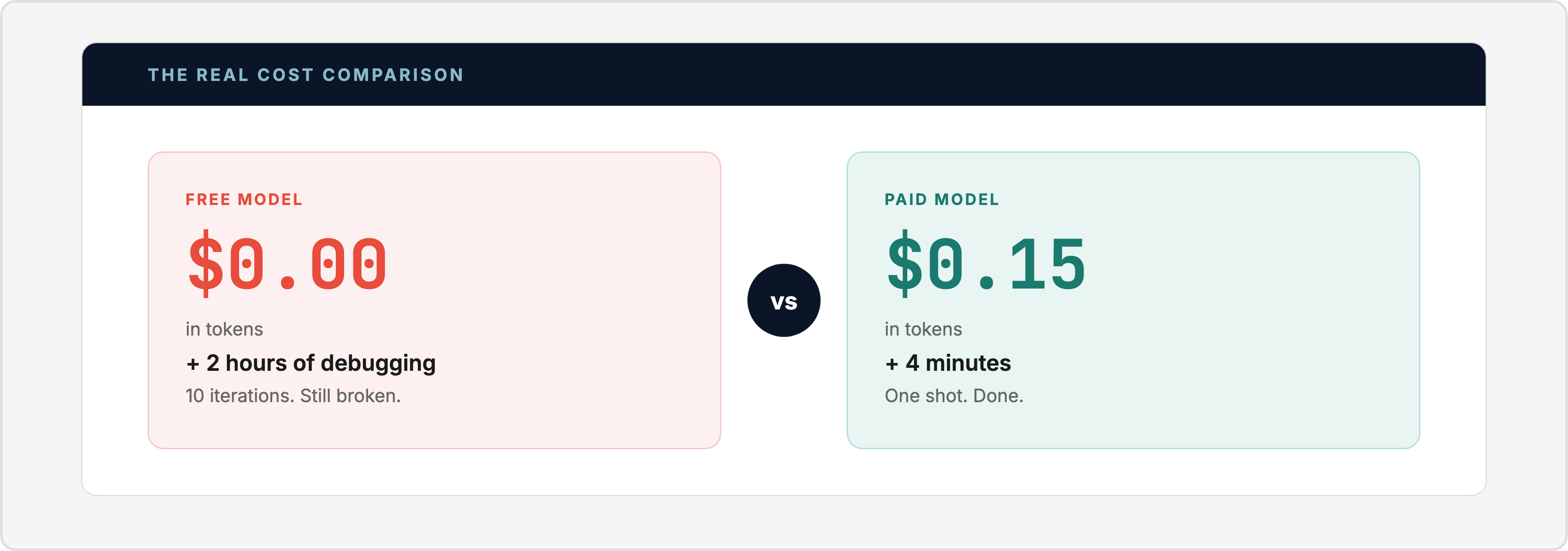

The “expensive” model cost me about $0.15 in API tokens and four minutes of my time. The “free” model cost me $0 in tokens and two hours of my evening. The free model was the expensive one.

That moment rewired how I think about AI costs.

The mental model most people get wrong

When people ask “how much does it cost to run AI agents?”, they’re thinking about token prices. Dollars per million tokens. Subscription fees. API bills.

That’s like asking “how much does a contractor cost?” and only looking at their hourly rate. The contractor who charges $150/hour and finishes in two hours costs less than the one who charges $40/hour and takes three weeks.

A free model that takes 10 attempts to get right costs more than a paid model that gets it right the first time. Not in tokens. In the only resource you can’t buy more of.

I’ve been running a multi-agent AI system for about three months now. Nine specialized agents handling everything from email triage and market research to building full SaaS products, generating websites, drafting content, and managing infrastructure. In that time, I’ve launched two products (a B2B CRM and a compliance platform), shipped three websites, written a dozen articles, and automated most of my daily operations. The most important lesson wasn’t about which models are cheapest. It was about which tasks need quality and which don’t.

The 80/20 split nobody talks about

Here’s what I found after testing models across every price tier on real tasks, not benchmarks.

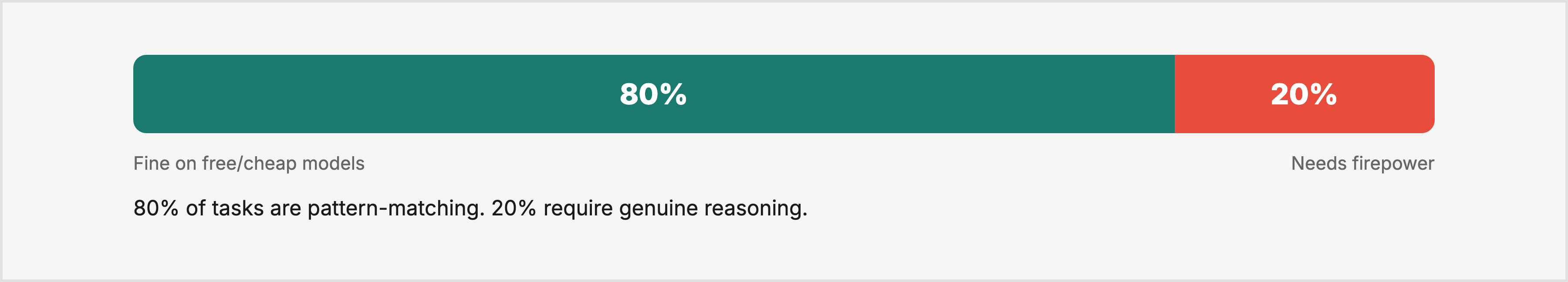

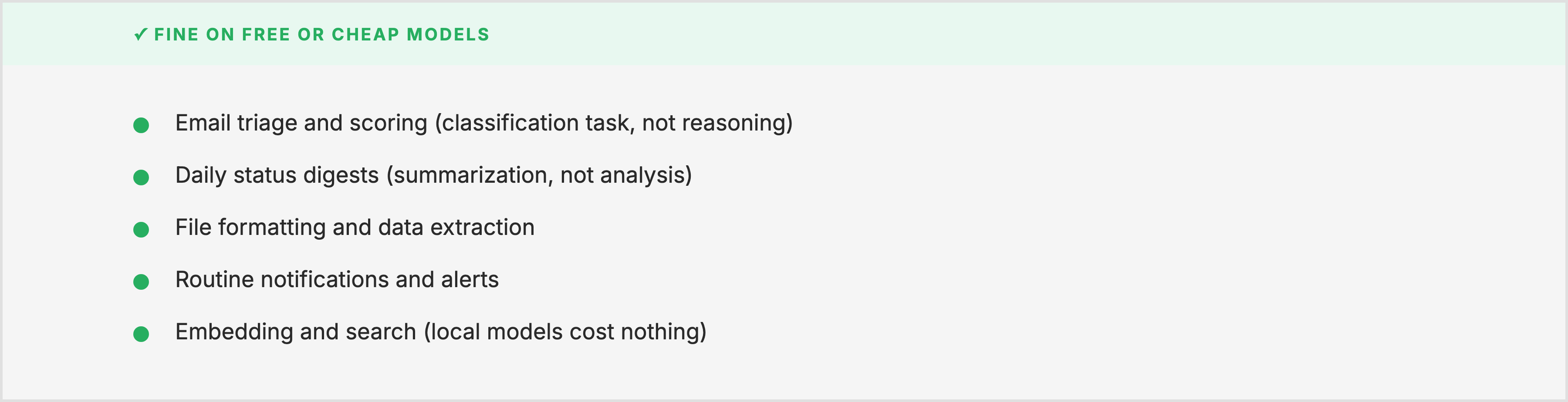

80% of agent tasks don’t need an expensive model. Email classification. File organization. Data formatting. Status summaries. Extracting structured information from unstructured text. These are pattern-matching tasks. A free model handles them fine because the task doesn’t require deep reasoning. It requires following instructions consistently.

20% of tasks will eat your entire week if you cheap out. Resume tailoring against a specific job description. Writing that requires judgment, not just fluency. Code generation for anything beyond boilerplate. Architecture decisions. Competitive analysis that requires connecting non-obvious dots. These tasks need a model that can hold complex context, reason about tradeoffs, and get it right the first time.

The mistake isn’t using free models. The mistake is using free models for everything.

Here’s what the split looks like from my actual fleet:

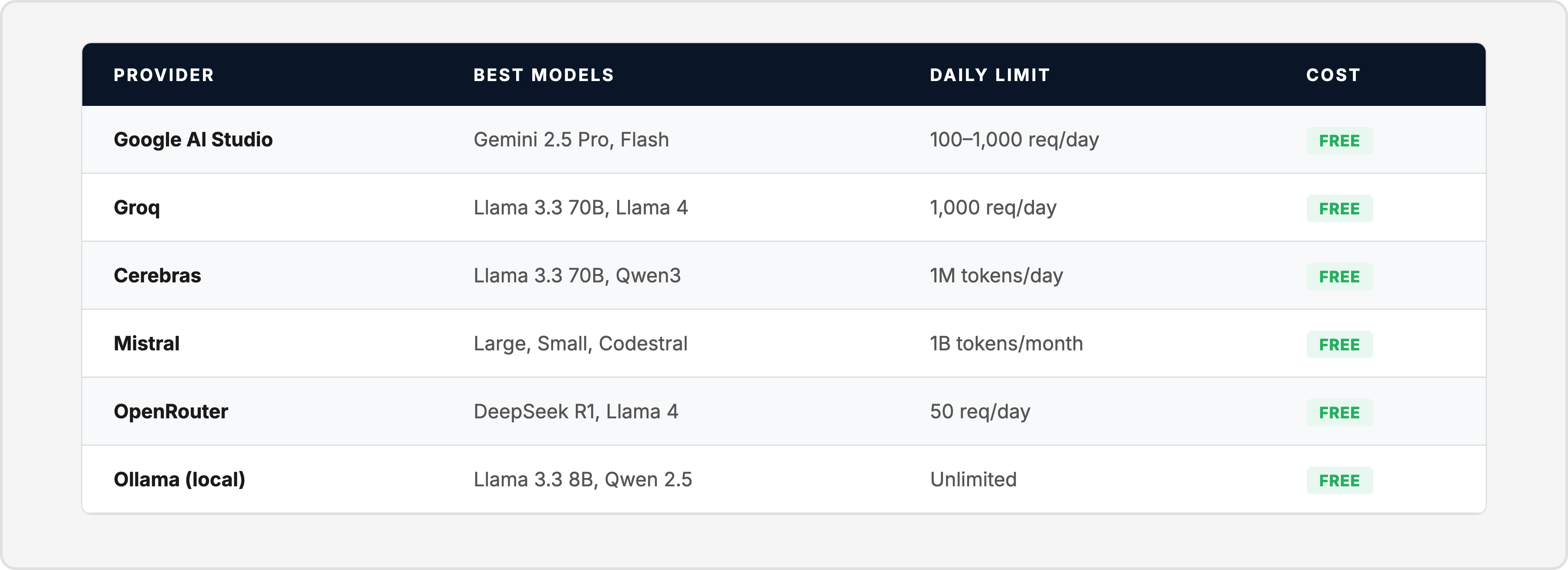

What’s actually free right now

The free tier landscape in 2026 is genuinely impressive. You don’t need a credit card for any of these:

These aren’t toy models. A single agent running on Google AI Studio’s free tier, triggered by a cron job twice an hour, can process 100 tasks per day without spending a cent.

Where to start (one agent, one task, one evening)

Don’t build a fleet. Build one agent that does one useful thing.

Pick your most repetitive daily task. For me, it was email triage. For you it might be summarizing meeting notes, scanning job boards, organizing files, or generating weekly reports.

Write a script that calls a free API with a prompt, processes the result, and saves it to a file. Trigger it with a cron job. That’s it. No framework. No orchestration layer. No dashboard.

Add a second agent when the first one proves its value. Connect them through files: agent 1 writes output to a directory, agent 2 reads from it. That file handoff is your first taste of what a “team” feels like. Two specialized functions, passing work between them, without you in the middle.

The signal to upgrade

Stay on free models until you notice this pattern: you’re spending more time fixing the model’s output than doing the work yourself.

When debugging the agent takes longer than the task it automates, the free model just got expensive. That’s the signal to upgrade. Not before.

For me, the upgrade was worth it when I hit tasks that required genuine reasoning, not pattern matching. Resume tailoring. Code that needed to work the first time. Analysis that connected dots across multiple documents. Those tasks on a free model weren’t slower. They were broken.

The honest cost breakdown of my current setup: the infrastructure agents (email, file management, status updates) could run entirely on free tiers. The strategy and building agents need paid models. My total spend is real, but 80% of the system’s value comes from tasks that don’t require it.

Stop optimizing for cheap. Start optimizing for done.

The question isn’t “what’s the cheapest way to run AI?” The question is “what’s the fastest way to get this task done correctly?”

Sometimes that’s a free model. Sometimes it costs $0.15 in API tokens. Rarely is it the most expensive option. But chasing $0 as the goal, instead of treating it as a starting point, will cost you the thing that actually matters.

Your time.

Start with one agent. Use a free API. Solve one real problem. When the free model starts costing you hours instead of saving them, spend the fifteen cents.

That’s the whole framework. I’m learning more every week, and the next article in this series will go deeper into what I’d build differently if I started over today.